How to not be fooled by fake science

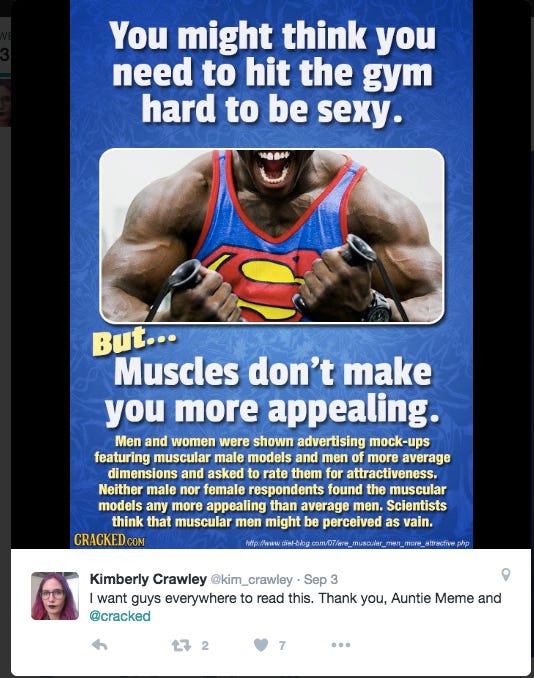

A couple months ago, a dating coach I follow on Twitter retweeted this meme from Cracked.com:

I was immediately intrigued- this seemed to contradict both my personal experience and what I understood to be the scientific consensus on male physical attractiveness. Then again, this guy has to know what he’s talking about, right?

So I took what was, to me, the obvious course of action: I looked up the article referenced in that meme. And I read it. And then I read the articles it referenced, and the articles they referenced. And a few things became clear.

First, the dating coach in question isn't great at fact-checking his sources. This meme turned out to be pure misinformation.

Second, neither is most anyone else. Not the originator of this tweet, nor any of the people commenting on the tweet. Not "Auntie Meme" at Cracked, the creator of this picture, nor the commenters at Cracked.com. And most of all, not the professional journalists and fitness “experts” whose crappy articles I ended up having to read through.

Third, checking sources can be a lot of work. The article referenced in that meme wasn’t an original source- it was a blog article. In fact, I had to get through several layers of blog and magazine articles to dig up the actual studies being referenced, and in one case even had to look a study up on Google Scholar.

This wasn’t a simple matter of clicking a link and reading an article. No, this was an EPIC QUEST FOR THE TRUTH. It was harrowing, dangerous, but above all else, infuriating. And in the process, I would have to confront a most implacable foe: fake science.

The Diet Blog Saga

It started out innocuously enough. Sitting down to my MacBook, I typed in the address provided in the Cracked meme. I fully expected to find something wrong with the meme’s caption, both because it went against everything else science tells us about attractiveness, and because it’s fucking Cracked.com. But I figured that, at worst, it would be one flawed study, or even a good study that had been cherry-picked. Hell, maybe I was even about to find out that the scientific consensus, as well as my own experience, were wrong.

I found myself facing, not the text of an original study, but an article on a health website called Diet Blog. The first thing I noticed was that the article begins by immediately citing a study that found that muscular men are, in fact, more attractive.

Immediately I felt a sinking feeling in the pit of my stomach. This was shaping up to be a far, far more perilous journey than I had foreseen- I was entering a dark and foreign land, one filled with bullshit volcanoes, poisonous links, and the marauding uruk-hai of un-science.

After quickly making myself a cup of English breakfast tea to steel myself for the journey, I collected my thoughts and forged onward. The study referenced at the start of the Diet Blog article was conducted at UCLA, published in Personality and Social Psychology Bulletin, and referenced by about twenty other articles.

In other words, it was highly credible. And it roundly contradicted Cracked’s assertion that women don’t care about muscles. Also, it turned out to be not one study, but three studies conducted by the same team and published together in a single article.

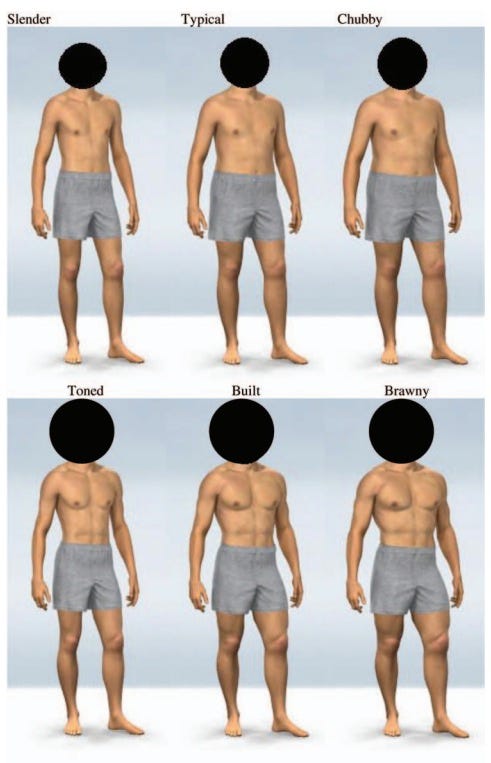

In fact, these three studies found that women consistently rated more muscular men as more attractive, up to a point- there was such a thing as too much muscle. Women in the study reported their casual sex partners were more muscular than their long-term partners, and more muscular than the average man. Finally, more muscular men reported having more sex partners than less muscular men.

The built and toned guys were rated most attractive, followed by the brawny guy. Built guy is slightly favored for casual sex and is viewed as more dominant; toned guy is viewed as a better boyfriend. Chubby guy comes in dead last.

So far, everything I had seen had directly contradicted the thesis that women don’t like muscular guys. But the article wasn’t over- the next headline, titled “The alternate view: Not all women like muscles,” promised to provide some counter-evidence. Okay, I thought, maybe this article won’t be so bad after all. As it turns out, I’m too optimistic for my own good.

This section of the Diet Blog article described another study from Flinders University in Australia, which found that women were just as attracted to average-bodied men as they were to muscular men. Here the article provided two links- one to the study itself, and one to a magazine article about the study.

Naturally, the first thing I wanted to do here was read the abstract of the study. Like the other journal article, this turned out to be a series of five small questionnaire studies combined into one. Also like the first journal article, it doesn’t support the premise of the Diet Blog article- four of the studies found that women prefer more muscular men. The fifth didn’t study body type at all, but did find that a perfectly symmetrical man was less attractive than a slightly asymmetrical one- potentially challenging scientific orthodoxy, but on a totally different topic.

Oh, and also these studies were performed at Cambridge, not Flinders University. Somehow “Jim F” at Diet Blog managed to lie about where it was conducted too. It was around this point that I became so enraged that I feared I would burst a vein, so I took another quick break to meditate and make myself some chamomile tea before plunging back into the slimy morass of bullshit in this article.

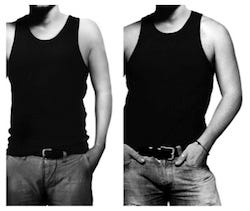

Next up I took a look at the magazine article on Stuff, a New Zealand news website, supposedly written about the “Flinders University” study. The first thing that jumped out at me was the photo of a bodybuilder used in the article- it turned out to be just a stock image, not one taken from the study. The second thing that jumped out at me was that this article wasn’t actually about that study at all- it was about a totally different study from the University of Queensland, Australia.

Two things to note: first, that photo isn’t from the study. It’s just a stock photo picked by the author of this article. Second, the title and opening paragraph both make it sound like muscular men were found to be less attractive, which they weren’t.

These are two of the images that were actually used in the Queensland study.

Amazingly, this article couldn’t even be bothered to link to the journal article it was talking about. I had to look up the original study on Google Scholar. As it turned out, this study did find that average-bodied men did just as well as muscular men- at fashion modeling. Specifically, it found that advertisements featuring muscular men were no more effective at selling clothes than ads featuring average-bodied men, and that looking at average-bodied men made both male and female subjects feel better about their own bodies compared to looking at muscular men.

So, two big conclusions here- one about body image, and one about how to design an effective clothing ad. However, you may notice that this study wasn’t about attractiveness at all.

Got all that? Here’s a recap of the whole story:

1. The UCLA researchers conducted three studies, all of which found that muscular (again, up to a point) men were more attractive than men with average, skinny or chubby body types. They published all three studies in a single article.

2. The Cambridge team did five studies. Four of these studies again found that muscular men were more attractive than other guys. The last study wasn’t comparing body types at all, but was looking at the effects of symmetry. Like the UCLA team, the Cambridge team published their studies all in one article.

3. The Queensland team conducted and published a study on advertisements featuring male models of various body types. They found that ads featuring muscular men and average-bodied men were about equally effective in selling clothes, while ads featuring average-bodied men led to more positive body image in both male and female subjects. Again, this study was not about attractiveness at all.

4. Stuff magazine writes an article about the third study. It doesn’t quite lie outright, but the title and opening paragraph both misleadingly imply that the study found muscular men to be less attractive.

5. Diet Blog produces a big article that supposedly reviews the evidence for and against muscular guys being more attractive. This article correctly cites the UCLA study and even explains the conclusions. However, it completely lies about the conclusions of the Cambridge study. It also cites the Stuff article about the Queensland study while lying about the findings of that study. Most bizarrely, it misattributes both of these articles, describing them as both being from some study conducted at Flinders University. The Diet Blog article describes the evidence as mixed. In fact, out of the nine studies being referenced, seven found more muscular men to be more attractive, and the other two were asking different questions altogether. This is willful dishonesty on the part of Diet Blog.

6. Auntie Meme at Cracked.com then makes and publishes a meme photo which cites the Diet Blog article. Rather than repeating Diet Blog’s assertion that the evidence is mixed, this meme selectively quotes the part about the Queensland study to suggest that muscular men aren’t more attractive. Again, this is willful dishonesty by Cracked.com.

7. Both the Diet Blog article and the Cracked meme are shared and commented on by thousands of people who can’t be bothered to check the sources being cited. Looking at the comments on both articles, as well as on Twitter, nobody- I mean absolutely nobody- has pointed out that the articles in question are misrepresenting their sources.

8. Most damagingly, the meme gets shared by experts in the dating and fitness fields- and I know it was shared by a lot more people than just the ones I saw. These experts not only put this misinformation in front of a wider audience, they also add credibility to it.

Me after reading through all this crap.

This is a particularly bad case of scientific misinformation, but believe it or not, this isn’t even as bad as it gets. At least here, the original studies appear to have been honest. But all it took was two websites lying about the contents of those studies, and thousands of lazy readers- people who “fucking love science” as well as experts who should know better- helped put that misinformation in front of hundreds of thousands of eyeballs.

A lie can run round the world before the truth has got its boots on. - Terry Pratchett, The Truth

How the media lies to you about science

Use of the term “proven”

Scientists rarely utter words like proof, proven, or other terms that similarly imply a high degree of certainty, because individual studies don’t prove anything. In fact, there’s a strong chance that any given study will be contradicted by other studies. So scientists will instead use terms like “suggests” or “strongly indicates,” to acknowledge the uncertain nature of scientific discoveries.

That’s why good studies need to be replicated. Individual studies don’t prove anything- instead they just suggest or indicate things. Only the consensus of the available scientific evidence should ever be viewed as “proof.”

Cherrypicking articles and studies

Did you know that a recent study showed that sugar isn’t unhealthy? And another one showed that running could shorten your lifespan? Granted, dozens of previous studies have shown the opposite- but hey, this study’s new. That means it’s better, right?

This assumption that newer studies are better than old ones is often used as an excuse to cherry-pick articles that go against conventional wisdom. Again, the preponderance of scientific evidence is what matters here- one outlier study proves nothing, especially if it can’t be replicated. If you see a headline about one study that upends the conventional wisdom on something, odds are you’re seeing cherry-picking in action.

Misleading use of the word “significant”

In common parlance, significant means something like “big enough to make a noticeable difference.” But in statistics, it has a totally different meaning- it means that an observed study result is unlikely (by convention, less than a 5% chance) to have shown up through random sampling error alone if there were no real effect.

An observation can easily be statistically significant while also being so small as to have no practical significance, especially if the study has a large sample size. Studies will usually use a term like “practical significance” or “clinically significant” to indicate that an observed result is big enough to have practical importance.

When studies and articles use the term “significant” on its own, they’re pretty much always referring to statistical significance, which does not imply practical significance. Always look at the actual magnitude of the number.
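To make the gap between statistical and practical significance concrete, here is a rough sketch using a simple two-group z-test. The diet pill, its 0.2 lb effect, and the 10 lb standard deviation are all made-up numbers for illustration, and the function name is my own; the point is only that the same tiny effect goes from "not significant" to "wildly significant" when nothing changes except the sample size.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p_value(mean_diff, sd, n_per_group):
    """p-value for a difference between two group means (simple z-test)."""
    # Standard error of the difference between two equal-sized groups.
    standard_error = sd * sqrt(2 / n_per_group)
    z = mean_diff / standard_error
    # Probability of a gap at least this large if there were no real effect.
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical numbers: a diet pill with a 0.2 lb average effect, where
# individual results vary with a standard deviation of 10 lbs.
p_small_study = two_sided_p_value(mean_diff=0.2, sd=10, n_per_group=100)
p_huge_study = two_sided_p_value(mean_diff=0.2, sd=10, n_per_group=1_000_000)

print(f"100 per group:       p = {p_small_study:.3f}")
print(f"1,000,000 per group: p = {p_huge_study:.3g}")
```

The effect is 0.2 lbs in both cases, which nobody would notice on a bathroom scale. Only the second study gets to call it "significant."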

Flat-out lying about the conclusions of a study

You know, like Diet Blog and Cracked. But here’s another example of how this works:

Suppose a study comes out that shows that when pregnant women are exposed to a certain class of chemicals, it can decrease the amount of testosterone their unborn sons are exposed to in the womb. Suppose that this chemical is used in products like plastics, floor cleaners and adhesives, in similar quantities to what was used in the study. Suppose as well that this class of chemicals can also be found in chicken, but at quantities several orders of magnitude lower than what was studied.

Now obviously if a gram of phthalates lowers your testosterone, it doesn’t follow that a milligram will do the same. And of course lower testosterone doesn’t automatically mean a baby will be born with micropenis. No responsible organization would ever tell women that eating chicken will cause their sons to be born with micropenis.

PETA, on the other hand, did just that. And that’s how “Exposure to large quantities of plastic lowers testosterone” becomes “eating any amount of chicken can cause micropenis.” Flat-out lies like this one happen all the time, and the culprit isn’t always an obviously biased group like PETA- regular news organizations aren’t above lying to produce attention-grabbing headlines.

Misstating the implications of a study

A while back, a study came out which showed that marathon runners have slightly higher levels of LDL cholesterol and arterial plaque than sedentary people. Leaving aside the fact that this is just one study, and assuming the results of the study are accurate, does it imply that running is bad for your health?

Not exactly. The same study also found that marathon runners had healthier bodyweights, higher HDL (good) cholesterol, lower blood pressure, lower resting heart rates, and healthier insulin levels than sedentary subjects. 17% of sedentary subjects had type 2 diabetes; none of the runners did.

Elevated levels of LDL cholesterol are loosely correlated with heart disease, and arterial plaque certainly isn’t good- but on balance, the marathon runners were still healthier. As this study attests, there is growing evidence that marathons and other endurance sports are a bit of a mixed blessing for your health, and shorter, more intense forms of exercise are better for you. But still, marathons aren’t bad for you- that’s an example of trying to infer too much from one specific bit of data.

Misrepresenting what the study was even studying

We saw an example of this in the story above- in the study on advertising and body image. The study found that advertisements featuring muscular men were no more effective than advertisements featuring average-bodied men, and also that looking at average-bodied men made both men and women feel better about their own bodies versus looking at muscular men.

Media articles about the study either stated or implied that the study had shown women to be more attracted to average-bodied men than muscular men. But in fact, the study didn’t look at sexual attraction at all; only body image and the perceived effectiveness of advertising.

Misleading statistics

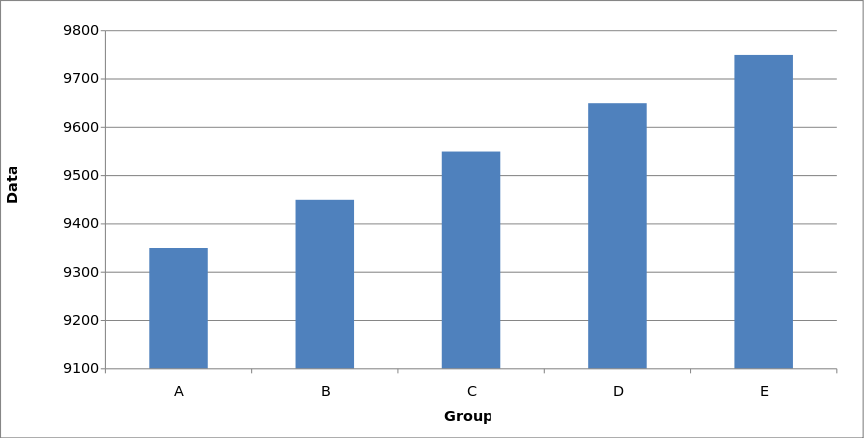

There are many ways to cite misleading statistics, but I’ll focus on the three most common. First we have the misleading graphic:

Source: Wikipedia. Yes, they have a whole article on misleading graphs.

Graphs like these are designed to make tiny statistical effects look significant. They’re frequently used by political groups to cite misleading economic statistics.
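One common version of this trick is the truncated axis: start the vertical axis just below the smallest value, and a small gap turns into a towering bar. I'm assuming that particular trick here (other misleading graphs work differently), and the numbers and function name below are invented for illustration.

```python
def drawn_height_ratio(smaller, larger, axis_start=0.0):
    """How many times taller the larger bar is drawn than the smaller one."""
    return (larger - axis_start) / (smaller - axis_start)


# Hypothetical example: a rate that goes from 35% to 39.6%.
honest = drawn_height_ratio(35.0, 39.6)                     # axis starts at 0
truncated = drawn_height_ratio(35.0, 39.6, axis_start=34.0)  # axis starts at 34

print(f"Axis from 0:  second bar is {honest:.2f}x as tall")
print(f"Axis from 34: second bar is {truncated:.2f}x as tall")
```

Same two numbers, same 13% difference, but the second chart makes it look like the value more than quintupled.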

Second, we have numbers provided without anything to measure them against. Suppose I said that the U.S. economy added a million jobs this year, or smoking deaths are down by five thousand compared to last year- are those big numbers? Without historical data to measure those changes against, you have no way of knowing.

And third, we have percentages provided without absolute numbers. For instance, eating two strips of bacon a day might raise your risk of colorectal cancer by as much as 18%. That sounds like a lot, except that the odds of getting colorectal cancer sometime in your life are about 5%- which means two strips of bacon raises those odds to less than 6%.

Also, colon cancer isn’t nearly as deadly as, say, lung cancer, particularly if caught early. Also, whether or not you have a family history of colon cancer is about ten to twenty times more important than whether you eat bacon. Also, you can make up for that bacon by also eating more vegetables. That 18% statistic just isn’t very scary once you’ve explored the data.
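The arithmetic behind the bacon example fits in a few lines. This sketch just uses the 5% baseline and 18% relative increase quoted above; the function name is my own.

```python
def absolute_risk(baseline_risk, relative_increase):
    """Turn a relative risk increase into the resulting absolute risk."""
    return baseline_risk * (1 + relative_increase)


baseline = 0.05            # roughly 5% lifetime risk of colorectal cancer
with_bacon = absolute_risk(baseline, 0.18)  # 18% relative increase

print(f"Without bacon: {baseline:.1%}")
print(f"With bacon:    {with_bacon:.1%}")
print(f"Added risk:    {with_bacon - baseline:.1%} (percentage points)")
```

Whenever you see a scary percentage, this is the calculation to run: find the baseline, multiply, and look at the difference in percentage points.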

Use of the term “controversial”

Vaccination is controversial- some people think it causes autism. Quantum physics is controversial- some people think it proves that magic and psychic powers are possible, but others disagree. Creationism is controversial. Scientific racism is controversial. Crop circles are controversial.

One thing all of these controversies have in common: the entire scientific community lines up on one side of them. None of these controversies are between scientists and other scientists- they’re between scientists on one side, and wizards, militia groups, and Jenny McCarthy on the other.

Could the entire scientific community be wrong? Sure. It’s unlikely- after all, the scientists are basing their arguments on evidence, not just the fact that they have PhDs- but they could be. Regardless, it is dishonest and unethical to present issues as if the mere fact that they’re the subject of disagreement means that the truth of the matter is in serious doubt. This kind of reporting is called false balance, and it’s just another form of sensationalism- one aimed at making arguments seem more exciting by propping up the losing side. "Controversial" is a weasel word that the media uses to perpetuate debates that were actually settled long ago.

Mindlessly repeating shit they heard without checking it

As in, what these two people did when they tweeted that Cracked meme, prompting me to write this article. This is both the most mundane and by far the most common way that the media deceives you about science. For every journalist who writes an article that deliberately deceives the reader, several others will write articles citing that first article. They usually won’t realize they’re spreading misinformation; they just don’t care enough to check their facts.

And that’s just the media- here’s how scientists themselves mislead you

When I outlined this article, I wasn’t planning to beat up on anyone in particular. But when I was looking for examples to cite, I realized that there’s one famous study that makes just about every mistake in the book. Take a quick guess as to which study I’m talking about, then read on. Hint: it’s a diet study.

Again, misuse of “significant”

Just as the media tends to conflate statistical significance with practical significance, scientists sometimes do as well. In some cases they may honestly not understand the difference. More often though, they’re deliberately equivocating as a ploy to get their study featured in the news.

Also, remember that “statistically significant” really means “there’s less than a 5% chance we’d see a correlation like this from random noise alone.” That 5% chance of being fooled is going to come up again in a few minutes...

Cherry-picking data points to draw conclusions from

Where the media cherry-picks studies, studies themselves sometimes cherry-pick data points to base their conclusions on. One high-profile example of this is The China Study, a famous book by T. Colin Campbell which purports to prove, based on population data from various regions of China, that eating animal products is the cause of nearly all “diseases of civilization,” such as diabetes, heart disease, and cancer.

One of the many, many problems with this book is that it completely ignores many populations, both inside and outside of China, which subsist largely on animal products and yet are healthier than populations eating vegan or nearly-vegan diets. The book doesn’t offer any explanation for these populations; it simply ignores them in favor of focusing on assertions that vegans are healthier, on average, than meat-eaters. And that’s a problem because...

Failure to account for confounding variables

What if I told you that people who drink soda live longer than people who don’t? It sounds crazy, but it’s true. Or it could be, depending on which population you’re looking at. North Koreans who drink soda live longer than North Koreans who don’t.

Of course, anyone who hasn’t been living under a rock knows that there are three types of North Koreans- the rich, people who are starving to death, and those who are already dead. Soda drinkers may live longer, but that’s probably due to also having food to eat, and access to medical care- not drinking soda.
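Here is a toy simulation of that soda effect. Every number in it is invented: I'm assuming 10% of the population is rich, that the rich are far more likely to drink soda, that wealth adds 15 years of life, and that soda itself actually costs you 2 years. It's a sketch of how confounding works, not a model of any real country.

```python
import random
from statistics import fmean


def simulate_population(n=50_000, seed=1):
    """Return (is_rich, drinks_soda, lifespan) tuples for a made-up population."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        is_rich = rng.random() < 0.10
        # Wealth drives soda access: 80% of the rich drink it, 5% of the poor.
        drinks_soda = rng.random() < (0.80 if is_rich else 0.05)
        base_lifespan = 75 if is_rich else 60
        # Soda itself is assumed to be mildly harmful: minus 2 years.
        lifespan = rng.gauss(base_lifespan, 5) - (2 if drinks_soda else 0)
        people.append((is_rich, drinks_soda, lifespan))
    return people


def mean_lifespan(people, drinks_soda, is_rich=None):
    """Average lifespan for a soda group, optionally within one wealth group."""
    return fmean([
        life for rich, soda, life in people
        if soda == drinks_soda and (is_rich is None or rich == is_rich)
    ])


people = simulate_population()
overall_soda = mean_lifespan(people, True)
overall_no_soda = mean_lifespan(people, False)
rich_soda = mean_lifespan(people, True, is_rich=True)
rich_no_soda = mean_lifespan(people, False, is_rich=True)
poor_soda = mean_lifespan(people, True, is_rich=False)
poor_no_soda = mean_lifespan(people, False, is_rich=False)

print(f"Everyone: soda {overall_soda:.1f} vs no soda {overall_no_soda:.1f}")
print(f"Rich:     soda {rich_soda:.1f} vs no soda {rich_no_soda:.1f}")
print(f"Poor:     soda {poor_soda:.1f} vs no soda {poor_no_soda:.1f}")
```

Lump everyone together and soda drinkers come out well ahead. Compare rich to rich and poor to poor, and soda drinkers do worse in both groups. That comparison within groups is what "controlling for a variable" means.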

At this point I should note that the population studied in The China Study consisted of villagers in rural China in 1983- in other words, impoverished people in an undeveloped country, just like in our soda example.

That’s precisely why good scientists always stress the importance of controlling for extraneous variables. It’s why The China Study should have controlled for factors like smoking and alcohol consumption.

In fact, when it comes to diet, it’s almost impossible to isolate just one change. If you reduce your protein consumption by 25 grams a day, you’re not just eating less protein- you’re also eating 100 fewer calories a day, or else you’re eating more carbs and/or fat to make up those calories.

If you give up hamburgers, you’re cutting out several things: beef, bread, ketchup, pickles, mustard...that’s a lot more than one change. And yet, countless studies and popular media articles observe that people get healthier when they stop eating at McDonald’s, and bizarrely jump to the conclusion that cutting out meat is the reason for the improvement.

This is a huge obstacle to effective diet research, and good diet studies always have to take pains to factor out possible confounding variables. It’s also yet another reason why it takes many studies to shift the scientific consensus on a given subject- because different studies using different methodology can have different confounding variables, multiple studies agreeing with each other are one good way of ruling out the influence of confounding variables.

P-hacking

Okay, remember a while back when I said that “statistically significant” really only means “we’re 95% confident that this correlation isn’t just random noise?” That 95% is called the confidence level, and the 5% left over is the cutoff that a study’s p-value has to fall under. Some studies actually use stricter cutoffs- like 2%, 1%, or even lower.
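As a rough illustration of what that 5% cutoff buys you, the sketch below runs a couple thousand imaginary studies on pure random noise, where there is nothing real to find, and counts how many come back "statistically significant" anyway. The study sizes, the seed, and the simple z-test are my own choices for the demonstration.

```python
import random
from statistics import NormalDist


def false_positive_rate(n_studies=2000, n_subjects=50, alpha=0.05, seed=0):
    """Fraction of pure-noise studies that still pass the significance cutoff."""
    rng = random.Random(seed)
    # Critical z value for a two-sided test (about 1.96 when alpha is 0.05).
    z_critical = NormalDist().inv_cdf(1 - alpha / 2)
    false_alarms = 0
    for _ in range(n_studies):
        # Every "subject" is random noise with a true average effect of zero.
        sample = [rng.gauss(0.0, 1.0) for _ in range(n_subjects)]
        z = (sum(sample) / n_subjects) * n_subjects ** 0.5
        if abs(z) > z_critical:
            false_alarms += 1
    return false_alarms / n_studies


rate = false_positive_rate()
print(f"{rate:.1%} of the noise-only studies looked statistically significant")
```

The exact figure depends on the random seed, but it should land somewhere around one in twenty, which is the whole problem once you start running lots of tests.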

A 5% chance of being wrong sounds pretty good- that means you’d need to test 20 correlations, on average, for one of them to be illusory. But do you know how many variables you have to look at to get 20 correlations? Just seven. Seven variables gets you 7x6/2=21 pairs you can correlate (dividing by two because A-versus-B and B-versus-A are the same pair), one of which will probably be an illusion.

Now look at how this calculation scales up as you add more variables. Double your data set to 14 variables, and you have 14x13/2=91 correlations to look at, meaning you can expect four or five of them to be illusory. The number of correlations- and thus, opportunities for error- rises with the square of the number of variables as you add more data.

The China Study found more than 8,000 statistically significant associations by studying 367 different variables across a population of 6500 subjects. This massive amount of data is usually touted as a plus- it’s not. Let’s do the math: if every variable is compared against every other one, that’s 367x366/2=67,161 correlations. And 5% of that number is about 3,358.

You read that right- statistically, as many as 40% of the statistically significant correlations found by The China Study are likely to be the product of random chance.

This practice is variously known as p-hacking, data fishing, or data dredging, and it’s a surefire way to make sure your study doesn’t come up empty-handed. Crunch enough numbers, and you’re sure to find something. The honest thing to do, of course, is to set the bar for statistical significance higher, maybe even above 99%- but not everyone is honest.

This practice fools a lot of people, because there’s a tendency to assume that more data is better. But there’s a distinction to be made between having more subjects, i.e. a larger sample size, and looking at more variables. Having a larger sample size lowers the risk of error while also allowing smaller correlations to be picked up- it’s a good thing. But looking at more variables is a double-edged sword- it expands the study’s potential to uncover interesting and useful data, but also allows more (and possibly far more) opportunities for error.

Drawing overly general conclusions from very specific data

Okay, so what about the other 60% or so of the results from the China Study that aren’t random noise? What do they tell us?

The study did find highly significant associations between consumption of casein- one of the two proteins found in milk- and a variety of cancers. This association holds up quite a bit better than the associations found with other forms of animal protein, and casein has in fact been implicated in a number of digestive, inflammatory and autoimmune disorders by other studies.

However, The China Study infers from this association that all animal protein is to blame- even though the data only implicates casein. In fact, the data is more specific than that: since the study was done in China, it technically only implicates Chinese dairy. The possibility that the problem is/was specific to China- something in the soil, or the particular way they processed dairy- shouldn’t be ruled out based solely on the data found in The China Study.

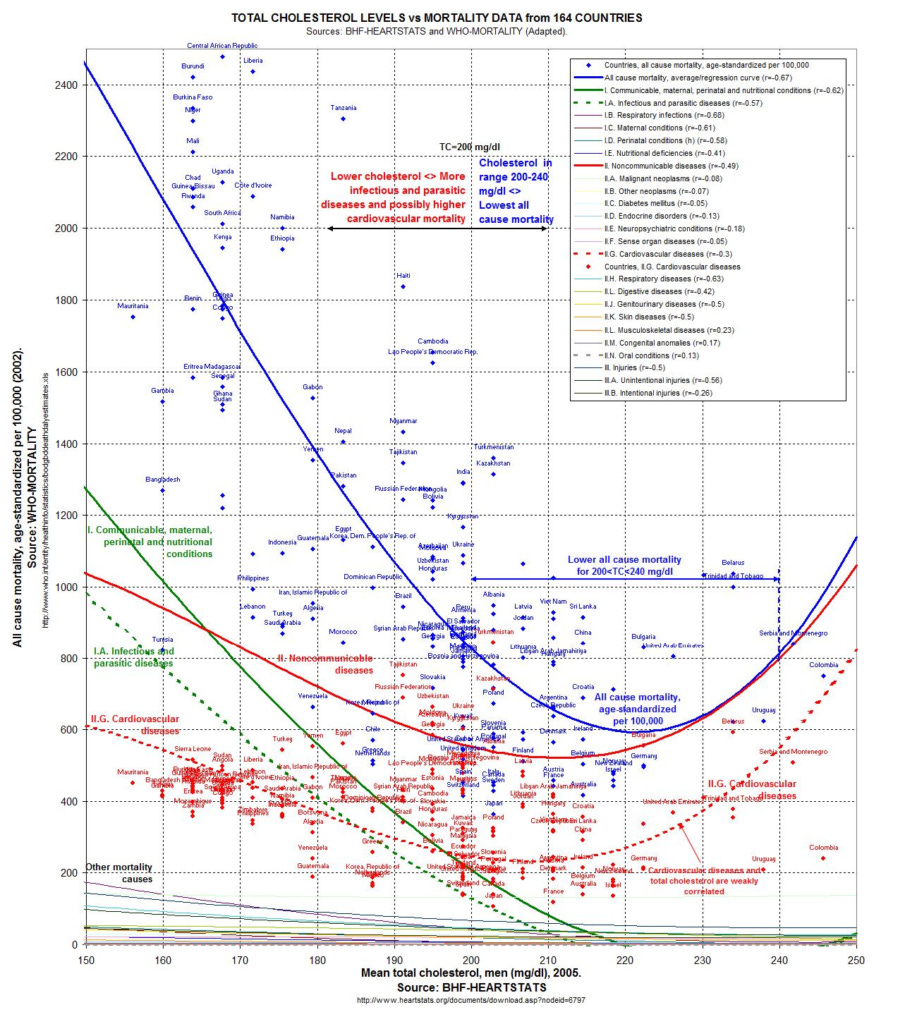

As another example: it’s well-known that high cholesterol is associated with a greater risk of heart disease. Most people take that to mean that high cholesterol is bad. But what if low cholesterol was associated with a different set of diseases- say, strokes and some forms of cancer. What then?

In fact, that’s precisely the case- low cholesterol can be as bad as high cholesterol, and it turns out that the ideal cholesterol level for minimizing all-causes mortality is in the “borderline high” range between 200 and 240 mg/dL.

Graph source: Perfect Health Diet. Original data taken from WHO statistics

And we’ll head back to the science of sexual attraction for one final example. Multiple studies have found that women generally don’t find bodybuilders very attractive. I have no doubt that that’s true- bodybuilders have more muscle than is physically possible without using massive doses of steroids.

But these same studies often conclude that women don’t like muscular men. Assuming “muscular men” means “guy who lifts but doesn’t do steroids,” this is reaching far beyond what the data can support. Maybe you’d look like a freak with eighty extra pounds of muscle- that doesn’t mean you wouldn’t look good with just ten more.

Choice of operational definition

You may be surprised to hear this, but science can’t measure whether a certain food is healthy, or a certain look is attractive. Not exactly. The concepts that a study wants to measure, like “health” and “attractiveness,” have to be translated into something specific and measurable. That something is called an operational definition.

For instance, if you wanted to measure how “healthy” something is, your operational definition could be all-causes mortality. That’s not bad, although it may still miss the distinction between living a long time vs not getting sick.

If you want to measure whether and how much a certain diet, workout or supplement boosts muscle growth, one way to do that is to measure protein synthesis- the rate at which a subject’s body assembles amino acids into proteins. This tends to be preferred over directly measuring muscle mass because protein synthesis can be measured far more accurately than lean body mass. The downside is that it assumes protein synthesis to be a good proxy for muscle growth. It probably is, but unlike many trainers I’m not 100% convinced that something isn’t getting lost in translation there.

Back to dating examples- many studies have tried to measure women’s attractiveness to men. Some have put men and women together and watched whether the man asked the woman out. Some have used online dating profiles and measured how many messages women received. Others have shown men photos and descriptions of women, and asked them if they would hypothetically like to date them. These are three different operational definitions of attractiveness, and you can’t assume they would all get the same result.

Selective publishing of research

Finally, not all research gets published. Sometimes a study finds no results.

Sometimes the results it finds are totally in line with the existing orthodoxy, and it doesn’t get published because it’s boring. Scientists have a major perverse incentive to publish studies with surprising or unorthodox results- they know those studies get the most press.

Sometimes the researchers are biased and have a favored conclusion they’re trying to reach- on behalf of their school, think tank, or corporate backers.

Become a crusader...for science

First, discard your blind faith in “science.” Just because something was said in an article in a scientific journal, doesn’t mean it’s true. No, not even if the study was perfectly good- plenty of seemingly good studies can never be replicated. And just because something was said in a meme photo citing a magazine article that supposedly references scientific studies, really really doesn’t make it true.

Second, do your part to check sources. If people tell you something’s been proven by science, demand that they show it. If they cite an academic research paper, at least read the freaking abstract.

Third, trust the consensus of the scientific community, at least until it’s been challenged by several credible studies that have stood up to replication. Don’t believe that all the scientists are wrong just because one shaky study in an obscure journal came to an unusual conclusion.

Fourth, call out misinformation whenever you see it- from friends, relatives, strangers, but most importantly when you see bad science being spread by supposed authorities in their field. Hold experts to an especially high standard of factual accuracy when they’re talking about their area of expertise.

And finally, seek out and become regular readers of bloggers who do understand science, thoroughly check their sources, and honestly communicate that information with their readers. There aren’t very many people in the fitness industry who fit the bill, as most fitness writers- even the good ones- are going more off personal experience and broscience than academic studies.

But there are a few. Menno Henselmans of Bayesian Bodybuilding takes the most scientific approach to fitness of anyone out there. Mark Sisson and the team at RobbWolf.com are usually pretty good about citing scientific evidence too.

In the dating space, there are even fewer writers who truly base their advice on sound science. Pickup artist “gurus” who make occasional cherry-picked, reductive references to evolutionary psychology are NOT practicing good science. The only site I know of that really makes an effort to ground everything in scientific research is The Mating Grounds, which seems to have gone on hiatus. Yes, that’s Tucker Max, the guy who used to write funny stories about being a drunken bully. His dating advice book for men is really good too, IMO.

The world is awash in bad science, and even worse articles about it. People spread that bad information in the misguided belief that regurgitating sciency-sounding stuff they heard represents a deep love for and understanding of science. They make health decisions based on bad science. 85% of the money spent on biomedical research is wasted on bad science- and worse yet, governments make public policy decisions based on that information.

So do your research. Do your part to fight this plague of misinformation. Be a crusader for the scientific method. And always remember that science is a liar....sometimes.